Researchers at the MIT Computer Science and Artificial Intelligence Laboratory (CSAIL) and the University of Toronto have developed a virtual home, "VirtualHome", in which virtual robots can successfully do housework. They have also created a database of household tasks described in natural language, which may in the future help systems such as Amazon's Alexa perform more complex tasks.

"Robot, bring me a bottle of 1982 Nongfu Spring."

Upon receiving this instruction, the robot would surely be baffled.

Never mind that a 1982 bottle of Nongfu Spring is certainly nowhere to be found. The more realistic problem is that even with an ordinary bottle of Nongfu Spring at hand, robots need clear, step-by-step instructions from humans to complete the task. They cannot easily infer and reason.

Inspired by The Sims, researchers at the MIT Computer Science and Artificial Intelligence Laboratory (CSAIL) and the University of Toronto have developed a virtual home, "VirtualHome", in which virtual robots can successfully make coffee, turn on a toaster, and rest on the couch. The researchers also created a database of household tasks described in natural language that may help Amazon's Alexa and other systems perform more complex tasks in the future.

VirtualHome: Simulating 1,000 Interactions Across Eight Home Scenes

VirtualHome is a 3D environment in which activities can be simulated as sequences of actions and interactions and rendered as video.

VirtualHome is based on three major modules:

A knowledge base of household tasks, including instructions for how to perform common tasks; the VirtualHome environment, a 3D simulator that simulates these tasks and generates videos of them; and a script generation model that generates programs from descriptions or video demonstrations.

The team used nearly 3,000 programs of different activities to train the system. These activities were further broken down into subtasks that a computer can understand. This is necessary because robots, unlike humans, need more explicit instructions to accomplish even simple tasks and cannot easily infer and reason.

For example, one person might tell another: "Turn on the TV and watch it on the couch." This sentence omits actions such as "picking up the remote control" and "sitting or lying on the couch", because they are part of human common sense.

To demonstrate such tasks to robots, the actions must be described in much more detail.

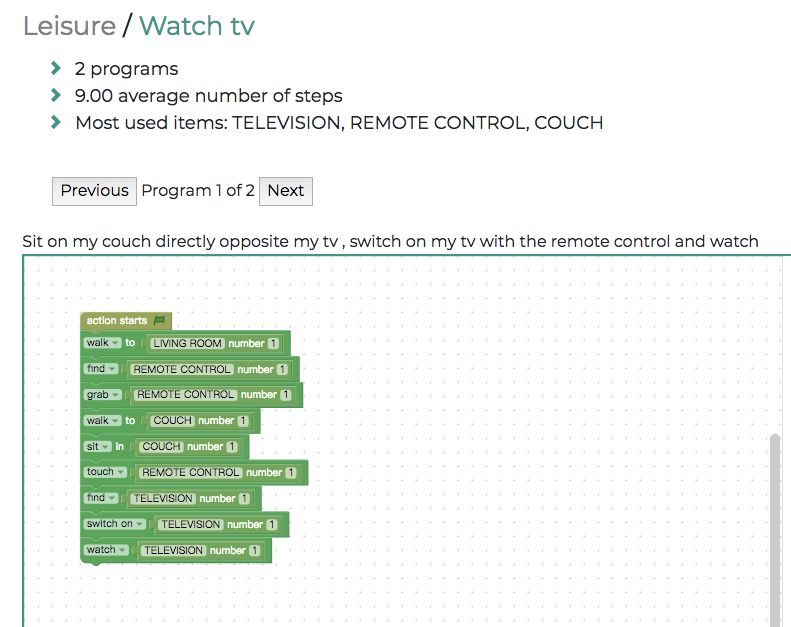

Even the single task of watching television is divided into multiple steps

To this end, the research team first collected verbal descriptions of household activities and then translated them into simple code. An instruction such as "Turn on the TV and watch it on the couch" might include the following steps:

Walk to the TV, turn on the TV, walk to the sofa, sit on the sofa, and watch TV.
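The step list above can be pictured as a small data structure. The following is only an illustrative sketch: the `Step` class, the `render` helper, and the action names such as `walk_to` and `switch_on` are hypothetical and are not the notation the VirtualHome team actually uses.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Step:
    """One explicit step: an action applied to a single object (hypothetical format)."""
    action: str
    target: str


def render(program: List[Step]) -> List[str]:
    """Format each step as a numbered, human-readable line."""
    return [f"{i}. {step.action} {step.target}" for i, step in enumerate(program, start=1)]


# "Turn on the TV and watch it on the couch", with every step spelled out.
watch_tv = [
    Step("walk_to", "tv"),
    Step("switch_on", "tv"),
    Step("walk_to", "sofa"),
    Step("sit_on", "sofa"),
    Step("watch", "tv"),
]

for line in render(watch_tv):
    print(line)
```

The point of the sketch is that nothing is left to common sense: the one-sentence instruction becomes five explicit steps, each naming both an action and the object it applies to.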

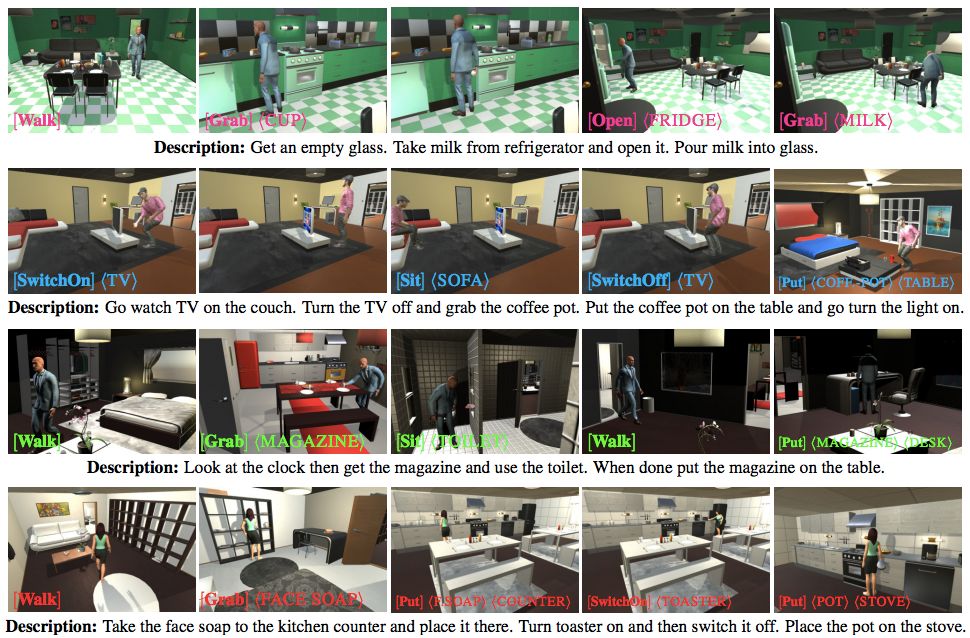

Once these programs are created, the team feeds them into the VirtualHome 3D simulator, which converts them into video. The virtual agent then performs the tasks defined by the program, whether that means watching TV, putting a pot on the stove, or turning a toaster on and off.

The team's virtual robots can perform 1,000 such interactions in the VirtualHome world, which has eight different scenes, including a living room, kitchen, dining room, bedroom, and home office.

What makes the programs unique: they contain every step needed to perform an activity

Take a look at how it works.

The team collected a large knowledge base of household activities for robots. The dataset contains activities with natural language descriptions and corresponding programs, which are formal symbolic representations of an activity as a sequence of steps. What makes these programs unique is that they contain all the steps needed to perform the activity.

Each task has a high-level name and a natural language instruction. Then the team collects “programs” for these tasks (lower left in the figure below). The annotators “translate” the instructions into simple code.

The team then implemented the most common atomic (inter)actions in the VirtualHome 3D simulator, so that agents can be driven to perform the tasks defined by the programs. The team also proposed ways to automatically generate programs from text (top of the image above) and from video (bottom of the image above), so that agents can be driven through language and video demonstrations.

The figure above shows agents in VirtualHome executing programs generated from descriptions. Note that the top agent uses his left hand to open the refrigerator and grab an item because he is already holding an object in his right hand. The agent also has some limitations. For example, in the third row, the agent sits on the toilet fully clothed. In addition, carried items sometimes penetrate the agent's body slightly because the colliders are imprecise.
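The left-hand behaviour described above amounts to choosing whichever hand is not already holding something. The sketch below is only an assumption about how such a rule could look; the `pick_free_hand` function and the `holding` dictionary are hypothetical and do not come from the VirtualHome code.

```python
from typing import Dict, Optional

# Order in which hands are tried; the right hand is preferred when both are free.
HANDS = ("right", "left")


def pick_free_hand(holding: Dict[str, Optional[str]]) -> Optional[str]:
    """Return the first hand that holds nothing, or None if both are occupied."""
    for hand in HANDS:
        if holding.get(hand) is None:
            return hand
    return None


# The agent already carries an object in its right hand,
# so the refrigerator has to be opened with the left hand.
holding = {"right": "cup", "left": None}
print(pick_free_hand(holding))
```

With both hands occupied the function returns `None`, which a caller could treat as a signal that the agent has to put something down first.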

The future: robots may move beyond manufacturer-programmed tasks and learn from YouTube

The project was jointly developed by researchers from institutions including CSAIL and the University of Toronto and will be presented at the CVPR conference in Salt Lake City this month.

Qiao Wang, a research assistant at Arizona State University’s Department of Art Media and Engineering, said: “This work will help make real robot personal assistants possible in the future. Robots can learn tasks by listening to or observing the specific people around them, rather than having each task programmed by the manufacturer. This allows the robot to complete tasks in a personalized way, and one day it may even evoke an emotional connection through this personalized learning process.”

In addition, the research has produced not only a system for training robots to do housework but also a large database of household tasks described in natural language. Companies like Amazon that are working to develop Alexa-like robotic systems for the home could eventually use the data to train their models to perform more complex tasks.

In the future, the team hopes to train robots on real video instead of simulated video in the style of "The Sims", which would enable robots to learn by watching YouTube videos. The team is also working on a reward learning system in which agents receive positive feedback when they perform tasks correctly.
